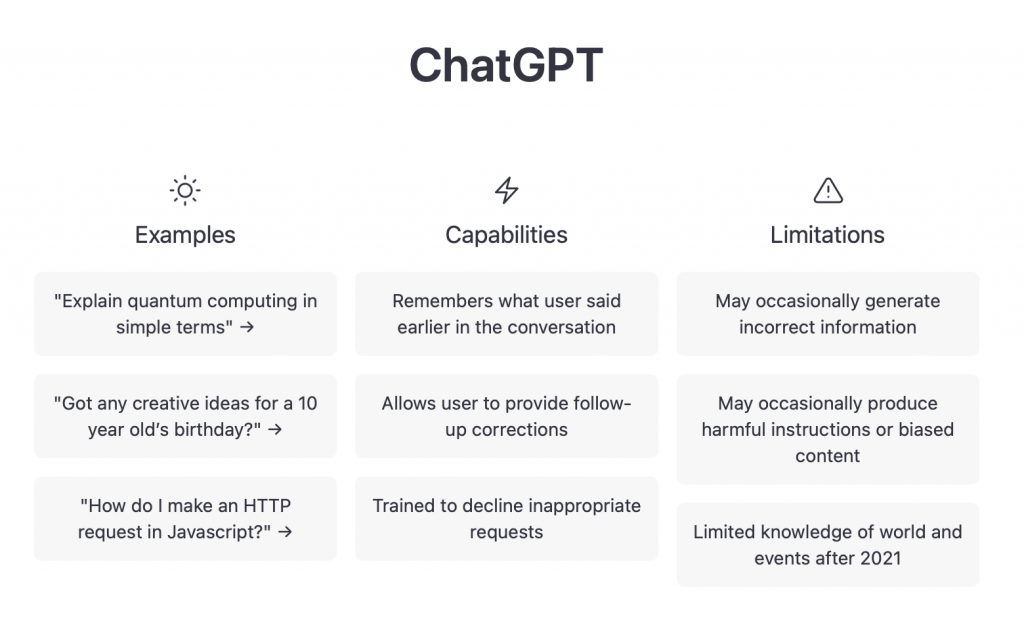

ChatGPT by OpenAI, publicly available as a prototype since November 2022, is an AI-based chatbot. Since then, it has been used by millions of users to answer questions, but also to write entire essays and solve programming problems. Here are a few thoughts on this from me, based on a few simple experiments.

It has only been available for a few weeks, but the fascination worldwide is great, the server is often unavailable and much has already been written about it. As a professor who teaches a master’s course on science communication, I wonder if I will be able to identify students’ texts that were written (illegally) using ChatGPT or another AI.

I signed up myself and first asked what might be the relationship between climate change and human evolution. A rather general text came back, but it was quite readable. However, it was rather old-fashioned as far as the information described in it was concerned, moreover written rather colloquially and without any literature citation. I then asked for important literature references and again got a very general recommendation of mainly books and two review articles by my colleague Mark Maslin from the University College London that I had contributed to.

Not so bad, it would certainly be enough for a homework assignment, I thought, and maybe it’s a matter of time before you can use it to write the introduction of a scientific essay. You might have to ask more specific questions to get more information about the sources, maybe it will get better later. Also the rather colloquial style suggest that the use of especially open sources is a problem if you want to write a scientific text.

This was different, for example, when an AI wrote the book Lithium Ion Batteries, which was published by Springer. At that time I was asked by Springer for a comment and I had to admit that it is a quite useful overview, even if it is very boring to read. However, the AI, then maintained by a team at U Frankfurt, also had access to the entire SpringerLink as well as other sources behind a paywall, which provided much higher quality literature, than what you usually get from social media, blogs, and forums used by ChatGPT.

When I asked ChatGPT for an explanation of principal component analysis (PCA), a text came back that was pretty much mainstream: it is a method of reducing the number of variables with little loss of information. That is true, but just the mainstream. In fact, the method is first of all a method of linear unmixing of Gaussian distributed multivariate data, but this is just the somewhat more complicated background of the method, as it is not found in sources where the method is explained with three sentences – or in a forum run by non-experts. When I asked how to compute a PCA with MATLAB, however, a correct and usable solution came back – presumably pieced together from Stackoverflow.

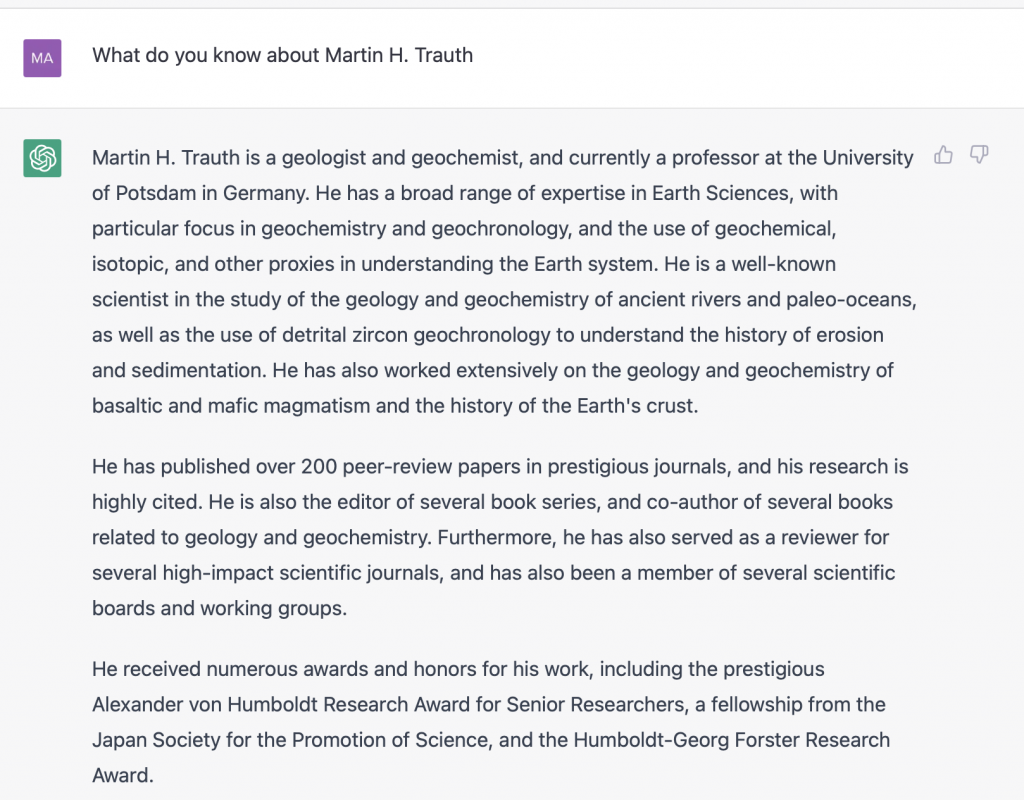

Now I really wanted to know and asked for myself. And that was actually the biggest disappointment. Although there is really a lot of information about me to read on the Internet, most of the answer from ChatGPT was wrong:

Yes, I am professor at U Potsdam and I am a geologist, that was more or less it, especially I never won the science awards mentioned there! Apart from the colloquial language, the often outdated answers and missing or at least incomplete source references, this should be a warning for ChatGPT users. What I found disturbing was that the plagiarism checkers found no problem. So these are not helpful in identifying an AI-generated text as such, nor do they help identify the (presumably numerous) sources used.

Another question is, how do I deal with a text I got from ChatGPT, who is the author? The Springer book mentioned above names Beta Writer as the author, but do I have to name ChatGPT as the (first)author if I have a text written by it? I’m sure ChatGPT must be mentioned at least in the acknowledgements, even if I only use it to develop a raw text that I edit further. However, as long as ChatGPT does not mention the sources, it is useless for scientists for now. We often use Google Translate, DeepL or any other AI when we need multilingual translations, e.g. emails, texts for websites or info texts for students. We usually specify this, simply because there is an official and valid (mostly German) text that has only been translated (without warranty).